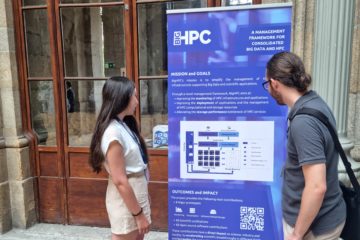

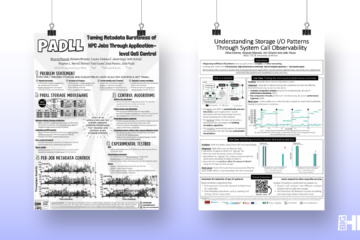

BigHPC project comes to an end

Three years ago, partners from both sides of the ocean gathered with a problem in mind: HPC infrastructures are increasingly sought after to support Big Data applications, whose workloads differ significantly from those of…